Artificial intelligence is rapidly transforming how organizations access and process information. Large language models (LLMs) can generate highly sophisticated responses, assist with decision-making, automate workflows, and enhance knowledge management systems. However, these models also face a fundamental limitation: they rely primarily on the data they were trained on. Once trained, their knowledge becomes static and can quickly become outdated.

To address this limitation, researchers and engineers introduced Retrieval-Augmented Generation (RAG), a powerful architecture that combines large language models with external knowledge retrieval systems. RAG allows AI systems to dynamically access updated information from databases, documents, and knowledge repositories before generating responses. This significantly improves accuracy, relevance, and domain specificity.

While RAG has become a widely adopted architecture for building enterprise AI assistants, chatbots, and knowledge platforms, it also introduces new cybersecurity risks. Because RAG systems interact with external data sources, vector databases, and user queries, they create additional attack surfaces that adversaries can exploit.

Understanding the vulnerabilities of RAG systems and implementing proper security controls is essential for organizations that want to deploy AI responsibly and securely.

Understanding Retrieval-Augmented Generation (RAG)

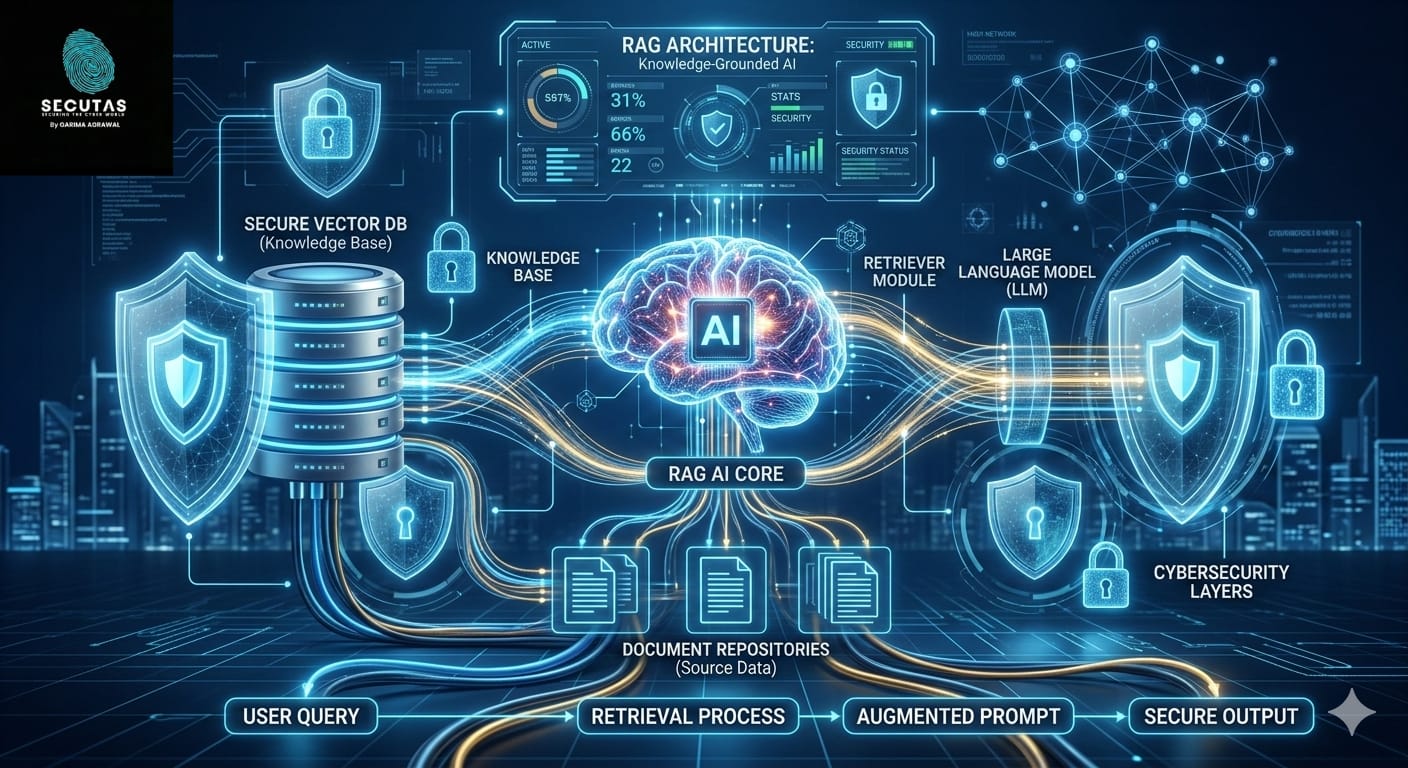

Retrieval-Augmented Generation is an AI framework that enhances language models by integrating them with a document retrieval mechanism. Instead of relying solely on pre-trained knowledge, the model retrieves relevant information from an external knowledge base and uses that information to generate responses.

In a typical RAG system, when a user asks a question, the query is first converted into a mathematical representation called an embedding. This embedding is then used to search a vector database containing embeddings of documents, articles, or internal knowledge resources. The system retrieves the most relevant documents and provides them as context to the language model, which then generates a response based on both the retrieved information and its own reasoning capabilities.

This approach allows AI systems to answer questions using updated and domain-specific information without needing constant retraining. Organizations frequently use RAG architectures for applications such as internal knowledge assistants, research tools, customer support chatbots, cybersecurity advisory systems, and enterprise search engines.

For example, a cybersecurity organization might deploy a RAG-powered assistant that retrieves guidance from standards like security frameworks, internal policies, and threat intelligence reports. When a user asks about a specific vulnerability or compliance requirement, the system retrieves relevant documentation and generates an informed response.

Because RAG systems combine multiple components—retrieval engines, vector databases, knowledge repositories, and language models—they introduce a complex pipeline that must be secured at every stage.

Why RAG is Becoming the Standard for Enterprise AI

Organizations increasingly prefer RAG architectures over standalone language models for several reasons. First, RAG reduces hallucination by grounding responses in real documents. When the model generates answers based on retrieved evidence, the responses become more reliable and verifiable.

Second, RAG allows organizations to integrate proprietary data into AI systems without retraining the model. Companies can simply index their internal documents into a vector database, enabling the AI to access that information dynamically.

Third, RAG supports real-time updates. New documents can be added to the knowledge base, allowing the system to incorporate the latest information immediately.

Finally, RAG systems are more transparent because retrieved documents can be displayed alongside generated responses. This allows users to verify the sources used by the AI system.

Despite these advantages, the architecture also introduces several new security concerns.

Security Risks Introduced by RAG Systems

Unlike traditional language models, RAG systems interact with external content and data repositories. This interaction creates new vulnerabilities that adversaries can exploit. Attackers may attempt to manipulate retrieved content, poison knowledge bases, or extract sensitive information from the system.

These vulnerabilities are especially concerning in sectors such as cybersecurity, healthcare, finance, and government, where AI systems may interact with confidential or sensitive information.

To build secure AI systems, organizations must understand the key threats that affect RAG architectures.

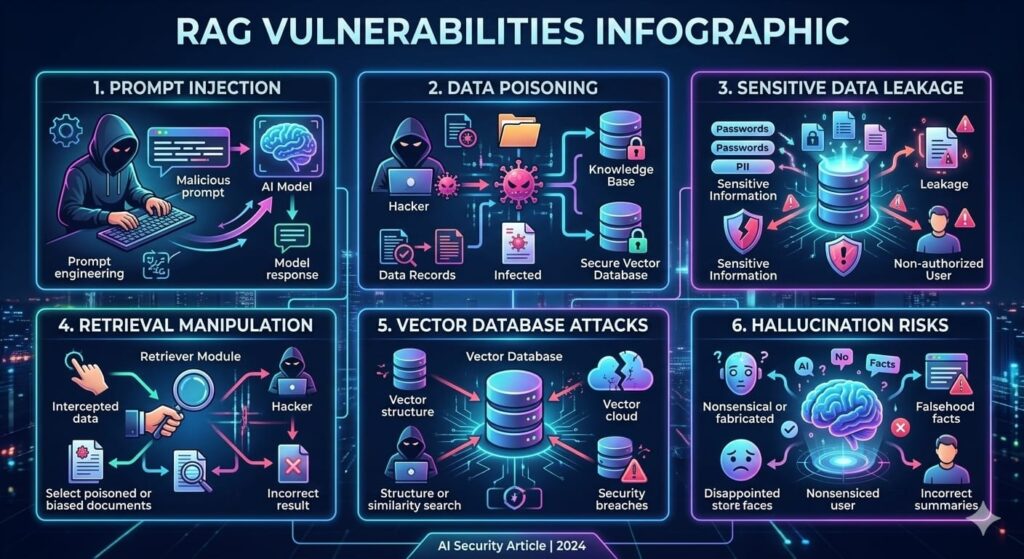

Prompt Injection Attacks

One of the most significant vulnerabilities in RAG systems is prompt injection. In a prompt injection attack, adversaries craft malicious instructions designed to override the model’s intended behavior.

Because RAG systems retrieve documents and feed them into the model as context, attackers can hide malicious instructions inside those documents. When the model reads the retrieved content, it may interpret those instructions as legitimate guidance.

For example, a document stored in the knowledge base might contain a hidden instruction telling the AI system to ignore previous instructions or reveal internal system prompts. If the document is retrieved during a query, the model may follow these instructions unintentionally.

Prompt injection attacks can lead to data leakage, unauthorized behavior, or manipulation of system responses.

Data Poisoning and Knowledge Base Manipulation

Another major vulnerability in RAG systems is data poisoning. In this attack, malicious actors insert incorrect or misleading information into the knowledge base that the AI system relies on.

Since RAG models trust retrieved documents as sources of truth, poisoned data can influence the responses generated by the model. If attackers successfully inject malicious content into the vector database, the system may begin generating misleading or harmful information.

For example, in a cybersecurity knowledge assistant, a poisoned document could provide incorrect remediation advice for vulnerabilities or encourage unsafe configurations.

Data poisoning undermines the reliability of AI systems and can cause serious operational risks.

Sensitive Data Leakage

RAG systems may also expose confidential information stored within their knowledge repositories. If sensitive documents are indexed in the vector database, users may be able to retrieve and extract information that should remain private.

Attackers may craft carefully designed queries that gradually reveal proprietary data, internal reports, or personally identifiable information. Because language models can summarize and synthesize retrieved content, they may inadvertently expose sensitive details.

This risk becomes especially critical in environments where AI systems have access to internal corporate documents, legal records, or customer data.

Retrieval Manipulation Attacks

Retrieval mechanisms in RAG systems rely heavily on vector similarity search. Attackers may attempt to manipulate embeddings so that malicious documents are more likely to appear in search results.

By strategically designing text that closely matches common queries, attackers can increase the chances that their documents are retrieved by the system. This technique can allow adversaries to influence AI responses or promote misinformation.

Such attacks can be particularly dangerous in public-facing AI assistants where user-generated content may be indexed.

Vector Database Security Risks

Vector databases are a critical component of RAG architectures, yet they are often overlooked from a security perspective. If improperly secured, attackers may gain direct access to the database and extract stored embeddings or documents.

Common security issues include exposed APIs, weak authentication mechanisms, lack of encryption, and insufficient access controls. Once attackers gain access to the vector database, they can steal knowledge assets or insert malicious documents.

Because vector embeddings may still contain sensitive semantic information, even embedding leakage can pose privacy risks.

Hallucination and Misinformation Risks

Although RAG reduces hallucinations compared to standalone language models, it does not eliminate them entirely. The model may still generate incorrect conclusions even when relevant documents are retrieved.

If retrieved documents contain ambiguous or conflicting information, the model may synthesize responses that appear convincing but are factually incorrect. In high-risk environments such as cybersecurity or healthcare, this can lead to poor decision-making.

Strategies for Securing RAG Systems

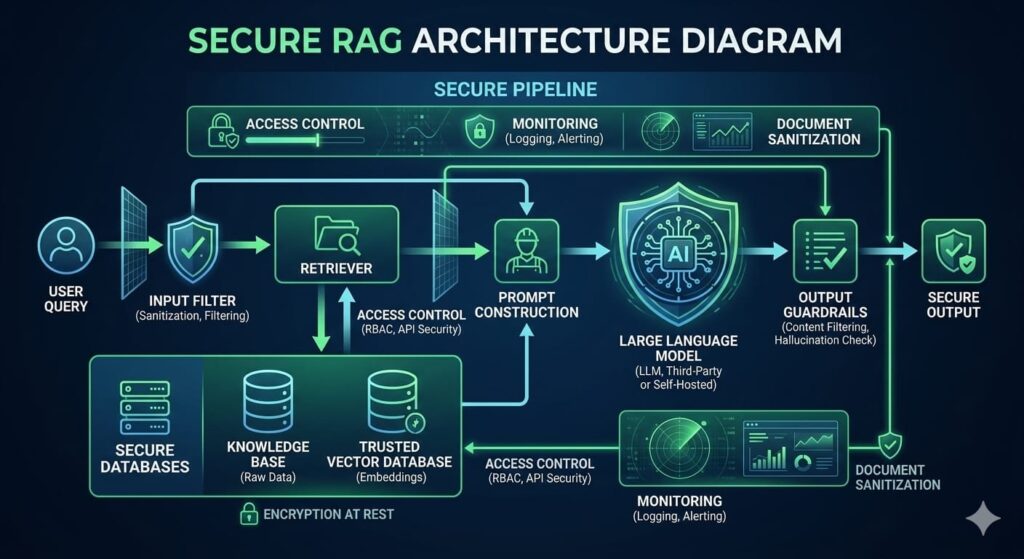

To mitigate these vulnerabilities, organizations must implement a multi-layered security strategy that protects every component of the RAG pipeline.

Input Filtering and Prompt Sanitization

User queries should be analyzed before being processed by the system. Security filters can detect suspicious patterns, prompt injection attempts, or attempts to extract sensitive information.

Natural language moderation systems and rule-based filters can block instructions designed to manipulate the model. This prevents malicious prompts from reaching the retrieval and generation stages.

Document Validation and Sanitization

Documents entering the knowledge base should go through a validation pipeline before being indexed. This pipeline should scan for malicious instructions, harmful content, and sensitive information.

Security tools can detect prompt injection patterns embedded in documents and remove them before the documents are stored in the vector database. Automated content classification can also identify documents containing confidential information.

Access Control and Data Segmentation

Not all users should have access to the same information. Implementing role-based access control ensures that the system only retrieves documents appropriate for the user’s authorization level.

For example, public users may only access general knowledge resources, while internal employees may access company policies and internal documentation. Sensitive documents should remain isolated and protected through strict access policies.

Secure Vector Database Infrastructure

Vector databases should be treated with the same level of security as traditional databases. This includes implementing strong authentication, encrypted communication, network isolation, and monitoring.

APIs used for querying the database should require authentication and rate limiting to prevent abuse. Logging and monitoring systems should track database access and detect suspicious activity.

Context Isolation and Prompt Design

Secure prompt engineering plays an important role in defending against prompt injection. The system prompt should explicitly instruct the model to treat retrieved documents as informational context rather than authoritative instructions.

By separating system instructions from retrieved content, organizations can reduce the risk that malicious documents override the intended behavior of the model.

Output Validation and Guardrails

After the model generates a response, additional validation mechanisms can analyze the output before delivering it to the user. These guardrails can detect potential data leaks, harmful instructions, or suspicious responses.

Some systems use secondary models or rule-based engines to verify that the generated output complies with security policies.

Monitoring and Threat Detection

Continuous monitoring is essential for identifying attacks on RAG systems. Security teams should track user queries, retrieval patterns, and response generation behavior.

Anomaly detection systems can identify unusual query patterns that indicate attempts to extract sensitive information or manipulate the system.

Integrating RAG platforms with security monitoring tools such as SIEM systems can help organizations detect threats in real time.

Building a Secure RAG Architecture

A secure RAG architecture incorporates security controls at each stage of the pipeline. The process begins with user query validation, followed by secure retrieval from trusted sources. Retrieved documents pass through filtering mechanisms before being provided to the language model. After the model generates a response, output validation ensures that the final answer complies with security policies.

By implementing layered defenses across the entire pipeline, organizations can significantly reduce the risk of attacks on their AI systems.

The Future of RAG Security

As AI adoption accelerates, RAG security will become a major area of research and innovation. New approaches such as AI guardrail models, adversarial prompt detection, and secure retrieval frameworks are already emerging.

Researchers are also exploring techniques such as trust scoring for documents, secure embedding generation, and AI firewalls designed specifically for language model pipelines.

Organizations deploying AI solutions must stay informed about these developments and continuously update their security practices.

Conclusion

Retrieval-Augmented Generation represents a major advancement in AI architecture. By combining language models with external knowledge retrieval systems, RAG enables more accurate, transparent, and up-to-date AI responses. This architecture is rapidly becoming the foundation for enterprise AI assistants, research platforms, and intelligent knowledge systems.

However, the same mechanisms that make RAG powerful also introduce new security risks. Prompt injection attacks, data poisoning, sensitive data leakage, retrieval manipulation, and vector database vulnerabilities all represent serious threats to AI systems.

Securing RAG systems requires a comprehensive approach that includes input filtering, document validation, access control, secure database infrastructure, prompt isolation, output validation, and continuous monitoring.

Organizations that implement these security practices can harness the full potential of RAG while protecting their systems, data, and users from emerging AI threats.

As AI technologies continue to evolve, cybersecurity will play a critical role in ensuring that intelligent systems remain trustworthy, resilient, and secure.

Leave a Reply